China has warned the United States against allowing artificial intelligence systems to make life-and-death decisions on the battlefield, raising concerns about the ethical and strategic risks of expanding military use of advanced algorithms. The warning was delivered by Chinese defense ministry spokesman Jiang Bin during a regular briefing in Beijing, where he emphasized that AI should not be granted the authority to determine lethal outcomes in armed conflict. Chinese officials said maintaining human control over military decision-making is essential to prevent the erosion of accountability and ethical standards in modern warfare.

Jiang Bin cautioned that the unrestricted application of AI technology in military operations could lead to unpredictable consequences and undermine existing international norms governing armed conflict. He warned that excessive reliance on algorithms could weaken human oversight and potentially allow machines to influence critical battlefield decisions. Chinese officials said such developments could create what they described as a dangerous technological trajectory if governments fail to establish clear limits on how artificial intelligence systems are deployed in combat environments.

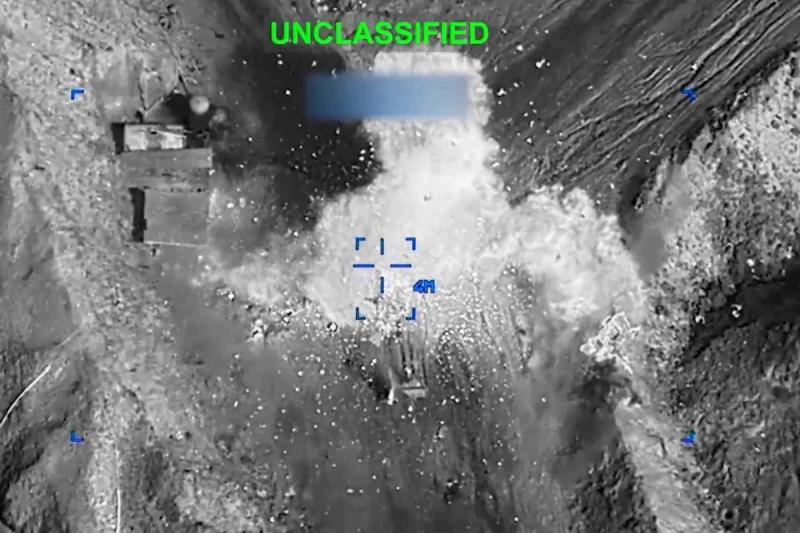

The remarks come amid growing debate over how the United States military plans to integrate artificial intelligence into its defense operations. Reports have suggested that Washington has been encouraging technology companies to permit broader military use of their AI systems and has already deployed AI-assisted tools in operations related to conflicts involving Iran and Venezuela. The increasing role of artificial intelligence in military planning, intelligence analysis and operational decision-making has intensified global discussions about the risks associated with autonomous or semi-autonomous weapons systems.

The issue has also been highlighted by an ongoing dispute between the Pentagon and artificial intelligence company Anthropic regarding the military use of its technology. The conflict centers on the company’s refusal to remove safeguards that prevent its AI systems from being used in autonomous weapons or mass surveillance programs. The Pentagon has since designated the company a supply chain risk, limiting the use of its technology in defense contracts and sparking legal challenges that could shape future cooperation between the military and private AI developers.

Chinese officials argue that allowing machines to influence lethal decisions could undermine international humanitarian law and increase the risk of unintended escalation during conflicts. Beijing has repeatedly called for maintaining human primacy in military applications of artificial intelligence and has advocated global guidelines that ensure AI technologies are used responsibly. Officials said that all weapon systems incorporating AI should remain under direct human supervision to preserve accountability and prevent automated systems from making irreversible decisions on the battlefield.

Analysts say the debate reflects broader geopolitical competition as major powers accelerate investments in military AI technologies. Artificial intelligence is increasingly being integrated into defense systems for tasks such as intelligence processing, target recognition and battlefield data analysis. However, experts caution that current AI models still face reliability and safety limitations, particularly in complex combat environments where mistakes could have serious consequences.

The warning from Beijing underscores the growing international concern over the militarization of artificial intelligence as countries race to develop advanced defense technologies. Governments and researchers are now debating how to balance technological innovation with ethical safeguards that can prevent misuse of AI in warfare. As global military powers expand their use of intelligent systems, discussions about regulation and oversight are expected to become a central issue in future security and arms control negotiations.